Foresight Ventures: AI+Crypto Ultimate Report V1

“AI is still one of the tracks that deserves the most attention and has the greatest opportunities in Web3, and this logic will definitely not change.”

Written by: Ian, Foresight Ventures

TL;DR

After months of research into the intersection of AI and crypto, my understanding of this direction has deepened. This article compares my earlier views with the current trends in the track. Readers already familiar with the track can start from Section 2.

- Decentralized computing power network: Given the challenge of market demand, this article stresses that the ultimate goal of decentralization is to reduce costs. The community attributes and tokens of Web3 bring value that cannot be ignored, but that value is still an add-on to the computing power track rather than a disruptive change. The focus should be on matching the network with real user needs, rather than blindly positioning decentralized computing power networks as a stopgap for the shortage of centralized computing power.

- AI Market: Discusses the concept of an AI market that financializes the full chain, and the vital value brought by the community and tokens. Such a market covers not only the underlying computing power and data but also the models themselves and related applications. Model financialization is the core element of the AI market: on the one hand, it lets users participate directly in the value creation of AI models; on the other, it creates demand for the underlying computing power and data.

- Onchain AI and ZKML face challenges on both the demand and supply sides, while OPML offers a more balanced trade-off between cost and efficiency. Although OPML is a technical innovation, it may not solve the fundamental problem facing on-chain AI: there is no demand.

- At the application layer, most web3 AI projects are too naive. The more sensible role for AI in applications is to improve user experience and development efficiency, or to serve as an important part of the AI market.

1. AI track review

Over the past few months, I have conducted in-depth research on AI + crypto. I am pleased that some of my early judgments about the direction of certain tracks have held up, but I can also see that current trends show some of my earlier views were not accurate.

**This article only shares opinions and does not introduce the basics.** It covers several broad directions of AI in web3 and presents my previous and current views and analysis on each. Different perspectives may offer different insights, so read them comparatively and critically.

Let’s first review the main directions of AI + crypto I laid out in the first half of the year:

1.1 Distributed computing power

In “A rational look at the decentralized computing power network”, starting from the general logic that computing power will become the most valuable resource of the future, I analyzed the value crypto can bring to computing power networks.

Although large AI model training is where decentralized distributed computing power networks see the greatest demand, it is also where they face the greatest challenges and technical bottlenecks, including complex data synchronization and network optimization. Data privacy and security are further important constraints. Some existing techniques offer preliminary solutions, but in large-scale distributed training they remain impractical due to the huge computational and communication overhead. Decentralized distributed computing power networks clearly have a better chance of being implemented for model inference, where there is ample room for future growth. Inference still faces challenges such as communication latency, data privacy, and model security, but compared with training it has lower computational complexity and less data interaction, which makes it better suited to a distributed environment.

1.2 Decentralized AI Market

In “The best attempt at decentralized AI Marketplace”, I argued that a successful decentralized AI marketplace needs to tightly combine the strengths of AI and Web3. By drawing on the added value of distribution, asset ownership, revenue distribution, and decentralized computing power, it can lower the barrier to AI applications, encourage developers to upload and share models, protect users’ data privacy rights, and become an AI resource trading and sharing platform that is developer-friendly and meets user needs.

The idea at the time (which may not be entirely accurate now) was that a data-based AI marketplace had greater potential. A marketplace that relies solely on models needs a large number of high-quality models, but early platforms lack a user base and high-quality resources, which makes it hard to attract excellent model providers. A data-based market, through decentralized and distributed collection, incentive-layer design, and data ownership guarantees, can accumulate a large amount of valuable data and resources, especially private-domain data.

The success of a decentralized AI marketplace depends on accumulating user resources and strong network effects, so that the value users and developers get from the market exceeds what they can get outside it. In the early stages, the focus is on accumulating high-quality models to attract and retain users; once a high-quality model library and data moat are in place, the focus shifts to attracting and retaining more end users.

1.3 ZKML

I discussed the value of on-chain AI in “AI + Web3 = ?” before ZKML became a widely discussed topic.

Without sacrificing decentralization and trustlessness, onchain AI has the opportunity to take the web3 world to the “next level”. Today’s web3 resembles the early stage of web2: it does not yet have the capacity to support broader applications or create greater value. Onchain AI is designed precisely to provide a transparent and trustless solution.

1.4 AI Application

In “AI + Crypto starts to talk about Web3 female-oriented games - HIM”, using our portfolio project “HIM” as an example, I analyzed the value large models bring to web3 applications: what kind of AI + crypto combination brings a product higher returns? Besides the hard-core route of building a trustless on-chain LLM from infrastructure up to algorithms, another direction is to downplay the black-box nature of the inference process within the product and find suitable scenarios in which to apply the powerful reasoning capabilities of large models.

2. Analysis of the current AI track

2.1 Computing Network: There is a lot of room for imagination but a high threshold

The general logic of the computing power network remains unchanged, but it still faces the challenge of market demand: who would want a solution that is less efficient and less stable? I think we need to consider the following points:

**What is decentralization for?**

If you ask the founder of a decentralized computing network today, they will probably tell you that their network can enhance security and attack resistance, improve transparency and trust, optimize resource utilization, provide better data privacy and user control, and protect against censorship and interference…

These are common talking points; almost any web3 project can claim censorship resistance, trustlessness, privacy, and so on. But in my view, they are not what matters. Think about it carefully: can’t centralized servers do better on security? Decentralized computing networks essentially do not solve privacy either, and there are many other such contradictions. Therefore: **The ultimate goal of decentralizing a computing power network must be lower costs. The higher the degree of decentralization, the lower the cost of using computing power.**

So, fundamentally, “utilizing idle computing power” is more of a long-term narrative. Whether a decentralized computing power network can be built, I think, depends largely on whether its builders have figured out the following points:

The value provided by Web3

A well-designed token, together with its incentive and penalty mechanisms, is clearly a powerful value-add provided by the decentralized community. Compared with the traditional Internet, tokens not only serve as a medium of exchange but also work with smart contracts to let protocols implement more complex incentive and governance mechanisms. The openness and transparency of transactions, lower costs, and efficiency gains likewise come from crypto. This unique value provides more flexibility and room for innovation in incentivizing contributors.

At the same time, I hope this seemingly natural “fit” can be viewed rationally. For decentralized computing networks, the value brought by Web3 and blockchain technology is, from another angle, just “added value” rather than a fundamental disruption: it cannot change the basic way the entire network works or break through current technical bottlenecks.

In short, these web3 features make decentralized networks more attractive, but they do not fundamentally change their core structure or operating model. If decentralized networks want to truly claim a place in the AI wave, relying on web3’s value alone is simply not enough. As discussed later, the right technology should solve the right problem. The point of a decentralized computing power network is by no means simply to solve the AI computing power shortage, but to give this long-dormant track new gameplay and new ideas.

This could resemble PoW mining or storage mining, monetizing computing power as an asset: providers earn tokens as rewards for contributing their own computing resources. The appeal is that it offers a direct way to convert computing resources into economic returns, which incentivizes more participants to join the network. Alternatively, web3 could be used to build a market that consumes computing power; by financializing what sits upstream of computing power (such as models), it can open up demand that can tolerate slower and less stable computing power.

The key is to understand how to match this with users’ actual needs. After all, users and participants do not necessarily need only efficient computing power; “making money” is always one of the most compelling motivations.

The core competitiveness of a decentralized computing power network is price

If we must assess decentralized computing power by its practical value, then the biggest opportunity web3 brings is the chance to compress computing costs even further.

The higher the degree of decentralization of computing power nodes, the lower the price per unit of computing power. This can be reasoned from the following directions:

- With the introduction of a token, payment to node computing power providers shifts from cash to the protocol’s native token, which fundamentally reduces operating costs;

- Permissionless access and web3’s strong community effect directly drive market-based cost optimization. More individual users and small enterprises can join the network with their existing hardware; as the supply of computing power in the market increases, its price falls, an effect reinforced by autonomous, community-governed management;

- The open computing power market created by the protocol will promote price competition among computing power providers, thereby further reducing costs.
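To make the price-competition argument concrete, here is a toy uniform-price market in Python. It is a hypothetical illustration, not the pricing mechanism of any particular protocol: asks are filled cheapest-first, and the marginal ask that covers demand sets the price everyone pays. The `Ask` type, provider names, capacities, and prices are all made up, and the sketch ignores token payments, reliability, and latency.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Ask:
    provider: str      # hypothetical provider name
    gpu_hours: float   # capacity offered in this round
    price: float       # asking price per GPU-hour (arbitrary units)


def clearing_price(asks: List[Ask], demand_gpu_hours: float) -> Optional[float]:
    """Toy uniform-price market: fill demand from the cheapest asks upward.

    The price of the last (marginal) ask needed to cover demand becomes the
    price every buyer pays. Returns None if total supply cannot cover demand.
    """
    remaining = demand_gpu_hours
    for ask in sorted(asks, key=lambda a: a.price):
        remaining -= ask.gpu_hours
        if remaining <= 0:
            return ask.price
    return None


if __name__ == "__main__":
    # A market served only by two large (hypothetical) cloud providers.
    clouds = [Ask("cloud-a", 100, 2.0), Ask("cloud-b", 100, 2.5)]
    # Permissionless entry: small providers join with existing hardware.
    small = [Ask("home-rig", 30, 1.2), Ask("small-dc", 40, 1.5), Ask("gamer-pool", 50, 1.8)]
    print(clearing_price(clouds, 150))          # 2.5
    print(clearing_price(clouds + small, 150))  # 2.0
```

With the same demand of 150 GPU-hours, letting the three small providers in moves the marginal ask from the second cloud (2.5) to the first (2.0). Whether a real network sees this effect depends on whether those small providers can meet buyers’ quality requirements.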

Case: ChainML

In short, ChainML is a decentralized platform that provides computing power for inference and fine-tuning. In the short term, ChainML will launch Council, based on an open-source AI agent framework; through Council (a chatbot that can be integrated into different applications), it aims to bring new demand to decentralized computing networks. In the long term, ChainML aims to be a complete AI + web3 platform (analyzed in detail later), including a model market and a computing power market.

I think ChainML’s technical roadmap is very reasonable, and the team has thought clearly about the issues raised above. The purpose of decentralized computing power is definitely not to match centralized computing power and supply the AI industry with enough capacity, but to gradually reduce costs so that suitable demand parties will accept this lower-quality source of computing power. In the early stages, when the protocol cannot yet attract a large number of decentralized computing nodes, the priority is to find a stable and efficient source of computing power. So, from a product perspective, it should start with a centralized approach: get the product pipeline running early, accumulate customers through strong BD capabilities, and expand the market; then gradually shift the supply of computing power from centralized providers to smaller, lower-cost companies, and finally to computing nodes spread over a wide area. This is ChainML’s divide-and-conquer approach.

On the demand side, ChainML has built an MVP of a centralized infrastructure protocol, designed to be portable. The team has been running this system with customers since February this year and has used it in production since April. It currently runs on Google Cloud, but because it is based on Kubernetes and other open-source technologies, it can easily be ported to other environments (AWS, Azure, CoreWeave, etc.). Over time, the protocol will be progressively decentralized, moving first to niche clouds and finally to miners who provide computing power.

2.2 AI market: There is more room for imagination

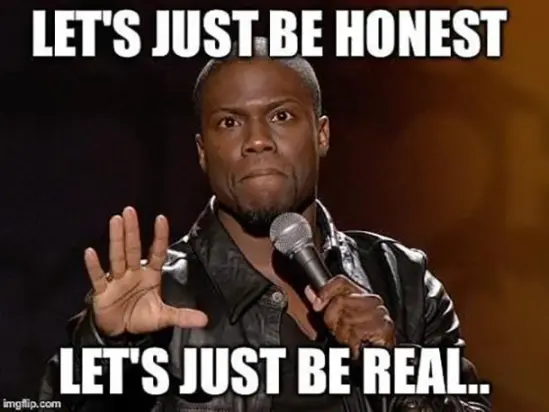

This sector is called the AI marketplace, a name that somewhat limits the imagination. Strictly speaking, a truly imaginative “AI market” should be an intermediate platform that financializes the entire model chain, covering everything from the underlying computing power and data to the models themselves and related applications. As mentioned before, the main problem for decentralized computing power in its early days is how to create demand, and a closed-loop market that financializes the entire AI chain has the opportunity to create such demand.

It goes something like this:

An AI market supported by web3 is built on computing power and data. More valuable data attracts developers to build or fine-tune models and then develop model-based applications; both developing and using these models and applications create demand for computing power. Driven by tokens and the community, bounty-based real-time data collection tasks or regular incentives for data contribution can expand and reinforce the unique advantages of this market’s data layer. At the same time, popular applications feed more valuable data back into the data layer.

Community

Beyond the value of tokens mentioned earlier, the community is undoubtedly one of the biggest contributions of web3 and the core driving force behind the platform’s development. Community and token support give contributors and the content they contribute a chance to surpass the quality achievable by centralized institutions. For example, data diversity is an advantage of this type of platform and is crucial for building accurate, unbiased AI models; at the same time, it is also the current bottleneck of the data direction.

I think the core of the entire platform is the model. We realized very early that the success of an AI marketplace depends on having high-quality models, which raises the question: what incentive do developers have to provide models on a decentralized platform? But we seem to have overlooked another problem. Its infrastructure is weaker than that of traditional platforms, its developer community is less mature, and its reputation lacks the first-mover advantage of traditional platforms. Against traditional AI platforms, with their huge user bases and mature infrastructure, web3 projects can only try to overtake on the corners.

**The answer may lie in the financialization of AI models**

- Models can be treated as commodities. Treating AI models as investable assets may be an interesting innovation for Web3 and decentralized markets. Such a market lets users participate directly in, and benefit from, the value creation of AI models. This mechanism also encourages higher-quality models and community contributions, because user returns are directly tied to the model’s performance and real-world usage;

- Users can invest by staking on models. On the one hand, a revenue-sharing mechanism encourages users to choose and back promising models and gives model developers economic incentives to build better ones. On the other hand, for stakers, the most intuitive way to evaluate a model (especially an image generation model) is to run it and test it multiple times. This creates demand for the platform’s decentralized computing power, and may be one answer to the question raised earlier: “Who would want to use less efficient and more unstable computing power?”
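A minimal sketch of how such a stake-weighted revenue-sharing rule could work, assuming a model’s revenue is split between its developer (a fixed cut in basis points) and its stakers (pro rata to stake). The function name, the basis-point parameter, and the integer token units are hypothetical choices for illustration, not any platform’s actual design.

```python
from typing import Dict, Tuple


def distribute_revenue(
    revenue: int, stakes: Dict[str, int], developer_bps: int
) -> Tuple[int, Dict[str, int]]:
    """Split one model's revenue between its developer and its stakers.

    `revenue` and `stakes` are in the token's smallest unit (integers avoid
    float rounding); `developer_bps` is the developer's cut in basis points.
    Stakers share the remainder pro rata to their stake. Integer-division
    dust goes to the developer, so the payouts always sum to `revenue`.
    Returns (developer_payout, {staker: payout}).
    """
    if not 0 <= developer_bps <= 10_000:
        raise ValueError("developer_bps must be in [0, 10000]")
    total_stake = sum(stakes.values())
    if total_stake == 0:
        return revenue, {}  # no stakers: the developer keeps everything
    developer_cut = revenue * developer_bps // 10_000
    pool = revenue - developer_cut
    payouts = {name: pool * amount // total_stake for name, amount in stakes.items()}
    dust = pool - sum(payouts.values())
    return developer_cut + dust, payouts
```

For example, `distribute_revenue(1000, {"alice": 300, "bob": 100}, 2000)` gives the developer 200 and splits the remaining 800 as 600 and 200. Routing rounding dust to the developer is an arbitrary choice here; a real protocol would have to specify it explicitly.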

**2.3 Onchain AI: OPML overtaking on the corners?**

ZKML: Both demand and supply are in trouble

What is certain is that on-chain AI is a direction full of imagination and worth studying in depth, and breakthroughs in on-chain AI could bring unprecedented value to web3. At the same time, ZKML’s extremely high academic barrier and its demands on underlying infrastructure make it unsuitable for most startups. And most projects do not need a trustless LLM to realize their own value.

But not every AI model needs to be moved on-chain and made trustless with ZK. Most people don’t care how a chatbot processes a query and produces a result, or whether the Stable Diffusion they use is a particular version of the model architecture with particular parameter settings. In most scenarios, users care whether the model gives satisfactory output, not whether the inference process is trustless or transparent.

If proving did not add a hundredfold overhead and higher inference costs, ZKML might still stand a chance. But given the high cost of on-chain inference, any demand side has reason to question whether Onchain AI is necessary at all.

Looking from the demand side

What users care about is whether the model’s results make sense. As long as the results are reasonable, the trustlessness provided by ZKML is practically worthless. Consider this scenario:

- If a neural-network-based trading bot brought users a hundredfold profit every cycle, who would question whether the algorithm is centralized or verifiable? Likewise, if the bot started losing users money, the team should focus on improving the model’s capabilities rather than spending effort and capital on making it verifiable. This is the contradiction in the demand for ZKML: in many scenarios, model verifiability does not fundamentally address people’s doubts about AI.

Looking from the supply side

There is still a long way to go before proofs can support large models. Judging from the current attempts of leading projects, the day when large models can be put on-chain is nowhere in sight.

As our previous article on ZKML explained, the technical goal of ZKML is to convert neural networks into ZK circuits. The difficulties are:

- ZK circuits do not support floating-point numbers;

- Large-scale neural networks are difficult to convert.
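The floating-point limitation is usually worked around with fixed-point quantization: every real number is multiplied by a constant scale and rounded to an integer, so the circuit only ever performs integer arithmetic. The Python sketch below shows the idea for a linear regression model. The scale of 2^16 and the function names are assumptions; real ZKML toolchains pick their own scales and also map values (including negatives) into a prime field, which this sketch leaves out.

```python
from typing import List

SCALE_BITS = 16          # assumed precision: 16 fractional bits
SCALE = 1 << SCALE_BITS


def quantize(x: float) -> int:
    """Map a real number to a scaled integer (round to nearest)."""
    return round(x * SCALE)


def dequantize(q: int) -> float:
    """Map a scaled integer back to a real number (for checking only)."""
    return q / SCALE


def linear_fixed_point(weights: List[float], bias: float, inputs: List[float]) -> int:
    """Integer-only evaluation of w . x + b, the kind of arithmetic a ZK circuit can express."""
    qw = [quantize(w) for w in weights]
    qx = [quantize(x) for x in inputs]
    # Each product carries the scale twice (2^32), so lift the bias to match.
    acc = sum(w * x for w, x in zip(qw, qx)) + (quantize(bias) << SCALE_BITS)
    # Rescale once to get back to 2^16 (arithmetic shift = floor division).
    return acc >> SCALE_BITS
```

For weights `[0.5, -1.25, 2.0]`, bias `0.1`, and inputs `[1.0, 3.0, -0.5]`, `dequantize(linear_fixed_point(...))` lands within rounding error of the float result, -4.15. Rounding error of this kind can accumulate as networks get deeper, which is one reason large models are hard to convert.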

Judging from the current progress:

- The latest ZKML libraries can convert some simple neural networks into ZK circuits and reportedly can put basic linear regression models on-chain. But very few demos exist.

- In theory, they can support at most ~**100M parameters, but this is only theoretical.**

ZKML’s development has fallen short of expectations. Judging from the proving progress of the current leading projects, Modulus Labs and EZKL, some simple models can be converted into ZK circuits to put models on-chain or to prove inference on-chain. But this is far from realizing ZKML’s value, and there seems to be no core motivation to break through the technical bottleneck. A track that seriously lacks demand fundamentally cannot attract the attention of the academic community, which makes it harder to build good POCs that attract or satisfy the remaining demand. This may be the death spiral that kills ZKML.

**OPML: Transition or endgame?**

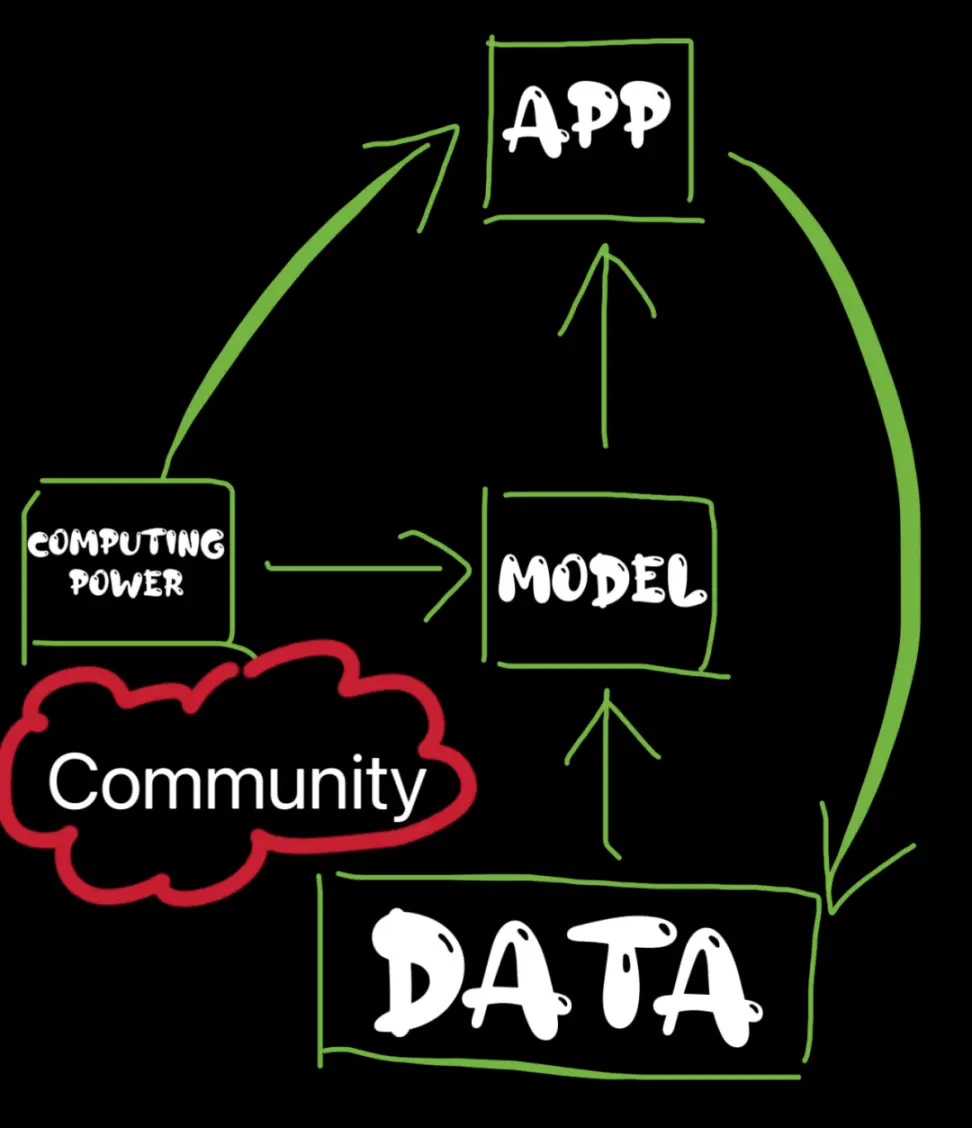

The difference between OPML and ZKML is that ZKML proves the entire inference process, whereas OPML re-executes only part of the inference when a result is challenged. Clearly, the biggest problem OPML solves is excessive cost and overhead. This is a very pragmatic optimization.
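Re-executing “only part” of a computation is typically done with an interactive bisection (fraud-proof) game, and the opML design described next follows a similar pattern. The sketch below is a simplified, hypothetical Python model: both parties commit to a hash of every intermediate state, binary search locates the first step where they disagree, and the referee re-executes only that single step. `run_step` stands in for one VM instruction and is not opML’s actual VM; in a real protocol the commitments are exchanged round by round rather than all at once.

```python
import hashlib
from typing import List, Optional

MOD = 1_000_003


def run_step(state: int) -> int:
    """Stand-in for one deterministic VM instruction (hypothetical)."""
    return (state * 31 + 7) % MOD


def build_trace(initial: int, steps: int, fault_at: Optional[int] = None) -> List[int]:
    """All intermediate states; `fault_at` corrupts one transition to model a cheating executor."""
    states = [initial]
    for i in range(1, steps + 1):
        s = run_step(states[-1])
        if i == fault_at:
            s += 1
        states.append(s)
    return states


def commit(state: int) -> str:
    """Hash commitment to one intermediate state."""
    return hashlib.sha256(str(state).encode()).hexdigest()


def find_disputed_step(prover: List[str], challenger: List[str]) -> int:
    """Bisection over committed state hashes.

    Assumes both sides agree on index 0 (the input) and disagree on the last
    index (the claimed result). Returns i such that they agree on state i-1
    and disagree on state i, so only the transition i-1 -> i is in dispute.
    """
    lo, hi = 0, len(prover) - 1  # invariant: agree at lo, disagree at hi
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if prover[mid] == challenger[mid]:
            lo = mid
        else:
            hi = mid
    return hi


def referee_accepts(pre_state: int, claimed_post_state: int) -> bool:
    """The only work done on-chain: re-execute a single step."""
    return run_step(pre_state) == claimed_post_state
```

With 1,000 steps and a fault injected at step 613, the search converges in about ten rounds (⌈log2 1000⌉) and the referee rejects the cheater’s transition at step 613. This logarithmic narrowing is why the on-chain cost stays small even for long computations.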

As the originator of OPML, the HyperOracle team described the architecture and the progression from one-phase to multi-phase opML in “opML is All You Need: Run a 13B ML Model in Ethereum”:

- Build a virtual machine for off-chain execution and on-chain verification, ensuring equivalence between the off-chain VM and the VM implemented in the on-chain smart contract.

- To keep AI model inference in the VM efficient, a purpose-built lightweight DNN library is implemented (one that does not rely on popular machine learning frameworks like TensorFlow or PyTorch). The team also provides a script that converts TensorFlow and PyTorch models to this lightweight library.

- Compile the AI model inference code into VM program instructions through cross-compilation.

- The VM image is managed through a Merkle tree. Only the Merkle root representing the VM state is uploaded to the on-chain smart contract.
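Committing only the Merkle root is enough because any single piece of VM state can later be checked against that root with a short proof. A minimal Python sketch, assuming the VM memory is split into fixed-size pages hashed with SHA-256; the page layout, the rule of duplicating the last node on odd levels, and the function names are illustrative assumptions, and a production design would also add domain separation between leaf and internal hashes.

```python
import hashlib
from typing import List, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: List[bytes]) -> List[bytes]:
    """Hash adjacent pairs; duplicate the last node when the level is odd."""
    if len(level) % 2 == 1:
        level = level + [level[-1]]
    return [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(pages: List[bytes]) -> bytes:
    """The only value that would be stored on-chain."""
    if not pages:
        raise ValueError("need at least one page")
    level = [_h(p) for p in pages]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(pages: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the bool says whether the sibling sits on the left."""
    level = [_h(p) for p in pages]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_page(page: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path from one page up to the committed root."""
    node = _h(page)
    for sibling, sibling_is_left in proof:
        node = _h(sibling + node) if sibling_is_left else _h(node + sibling)
    return node == root
```

During a dispute, the contract would only need the disputed page plus about log2(number of pages) sibling hashes, rather than the whole VM image.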

But this design has an obvious flaw: all computation must run inside the VM, which rules out GPU/TPU acceleration and parallel processing and limits efficiency. Multi-phase opML was therefore introduced:

- Computation is performed in the VM only in the final phase.

- In the other phases, state-transition computation happens in the native environment, taking advantage of CPUs, GPUs, and TPUs and supporting parallel processing. This reduces reliance on the VM, significantly improves execution performance, and reaches a level comparable to the native environment.
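A rough sketch of how a two-phase dispute might narrow things down, assuming the model is a chain of layers and each layer is a short sequence of VM-level micro-operations. Phase one bisects over per-layer outputs that both parties compute natively (where GPUs could be used); only the single disputed layer is then re-run inside the VM and bisected down to one micro-operation. Everything here (the toy arithmetic ops, the 8 x 16 layer sizes, and all names) is hypothetical and only mirrors the structure described above, not opML’s real implementation.

```python
from typing import Callable, List, Optional, Sequence, Tuple

P = 1_000_003  # toy prime modulus; each op is a bijection mod P, so a fault never "heals"
Op = Callable[[int], int]


def make_op(m: int, c: int) -> Op:
    return lambda x: (x * m + c) % P


def make_model(fault: Optional[Tuple[int, int]] = None) -> List[List[Op]]:
    """8 layers x 16 micro-ops; `fault=(layer, op)` corrupts one op (a hypothetical cheat)."""
    model = []
    for i in range(8):
        layer = []
        for k in range(16):
            c = k + 1 + (1 if fault == (i, k) else 0)
            layer.append(make_op(3 + 16 * i + k, c))
        model.append(layer)
    return model


def first_disagreement(a: Sequence[int], b: Sequence[int]) -> int:
    """Binary search for i with a[i-1] == b[i-1] and a[i] != b[i]."""
    lo, hi = 0, len(a) - 1  # invariant: agree at lo, disagree at hi
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if a[mid] == b[mid]:
            lo = mid
        else:
            hi = mid
    return hi


def layer_outputs(model: List[List[Op]], x0: int) -> List[int]:
    """Phase-one view: one value per layer, computed natively (fast path)."""
    outs = [x0]
    for layer in model:
        x = outs[-1]
        for op in layer:
            x = op(x)
        outs.append(x)
    return outs


def vm_trace(layer: List[Op], x: int) -> List[int]:
    """Phase-two view: the state after every micro-op, as a VM would record it."""
    trace = [x]
    for op in layer:
        trace.append(op(trace[-1]))
    return trace


def two_phase_dispute(prover, reference, x0: int) -> Tuple[int, int, bool]:
    # Phase 1: bisect over per-layer outputs to find the disputed layer.
    p_outs, r_outs = layer_outputs(prover, x0), layer_outputs(reference, x0)
    if p_outs[-1] == r_outs[-1]:
        raise ValueError("final outputs agree: nothing to dispute")
    j = first_disagreement(p_outs, r_outs)
    layer_idx, agreed_input = j - 1, p_outs[j - 1]
    # Phase 2: re-run only that layer in the "VM" and bisect over micro-ops.
    p_trace = vm_trace(prover[layer_idx], agreed_input)
    r_trace = vm_trace(reference[layer_idx], agreed_input)
    k = first_disagreement(p_trace, r_trace)
    op_idx = k - 1
    # Referee re-executes one micro-op with the agreed reference program.
    prover_ok = reference[layer_idx][op_idx](p_trace[k - 1]) == p_trace[k]
    return layer_idx, op_idx, prover_ok
```

Here `two_phase_dispute(make_model(fault=(5, 9)), make_model(), 12345)` pins the fault to layer 5, micro-op 9, and only the 16 micro-ops of that one layer ever run on the slow VM path.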

Reference: __Ui5I9gFOy7-da_jI1lgEqtnzSIKcwuBIrk-6YM0Y

LET’S BE REAL

Some people see OPML as a transition toward full ZKML, but it is more realistic to view it as a trade-off for Onchain AI based on cost structure and implementation expectations. The day ZKML is fully realized may never come; at least, I am pessimistic about it. In that case, the hype around Onchain AI will eventually have to face the hard realities of implementation and cost, and OPML may turn out to be the best practice for Onchain AI, just as the OP and ZK ecosystems have never simply been substitutes for each other.

Still, don’t forget that the demand-side shortcomings discussed earlier remain. OPML’s cost and efficiency optimizations do not resolve the fundamental tension: “Since users care more about whether the results are reasonable, why move AI on-chain to make it trustless?” Transparency, ownership, and trustlessness look impressive when stacked together, but do users really care? By contrast, the value should lie in the model’s reasoning ability.

I think this cost optimization is technically an innovative and solid attempt, but in terms of value it looks more like a clumsy detour.

Perhaps the Onchain AI track itself is just a hammer looking for nails, and that is fine. An early industry needs continuous exploration of innovative combinations of cross-domain technologies, finding the best fit through repeated trial and adjustment. What is wrong has never been colliding and experimenting with technologies, but blindly following trends without independent thinking.

2.4 Application Layer: 99% Frankenstein Mash-ups

I have to say that AI attempts at the web3 application layer keep coming one after another. Everyone seems to be in FOMO, but 99% of these integrations should remain just that: integrations. There is no need to lean on GPT’s reasoning ability to prop up the value of the project itself.

From the application layer’s perspective, there are roughly two ways forward:

- Use AI to improve user experience and development efficiency. In this case, AI is not the core highlight; it is more often a behind-the-scenes worker that contributes silently and may even go unnoticed by users. For example, the team behind the web3 game HIM has been very smart about combining game content, AI, and crypto. They have found the points of highest compatibility that generate the most value: on the one hand, they use AI as a production tool to improve development efficiency and quality; on the other, they use AI’s reasoning capabilities to improve the user’s gaming experience. AI and crypto do bring very important value, but fundamentally they are still tools. The project’s real advantage and core remains the team’s ability to develop games.

- Integrate with an AI marketplace and become an important user-facing part of the entire ecosystem.

3. Finally…

If there is one thing that really needs to be emphasized or summarized: AI is still one of the tracks that deserves the most attention and has the greatest opportunities in web3, and this general logic will definitely not change.

But what I think deserves the most attention is the gameplay of the AI marketplace. Fundamentally, the design of such a platform or infra matches the needs of value creation and serves the interests of all parties. From a macro perspective, it creates products beyond the model or computing power itself and is attractive enough to capture value in web3 in its own unique way. At the same time, it lets users participate directly in the AI wave in a unique way.

Maybe in three months I will overturn my current thoughts, so:

The above are just my honest views on this track, and they really do not constitute any investment advice!

Reference

opML is All You Need: Run a 13B ML Model in Ethereum: __Ui5I9gFOy7-da_jI1lgEqtnzSIKcwuBIrk-6YM0Y