GPT-5.4 Mini Launches! Speed Doubles as Small Models Grow More Practical

On March 18th, OpenAI released GPT-5.4 Mini and GPT-5.4 Nano, two lightweight models built for high-volume AI workloads. They arrive less than two weeks after the launch of the flagship GPT-5.4. GPT-5.4 Mini runs twice as fast as the previous GPT-5 Mini, while GPT-5.4 Nano is optimized for low-cost, real-time conversations.

Core Logic of Small Models: Accuracy Is Not Always the Bottleneck

OpenAI positions GPT-5.4 Mini and Nano as “the most powerful small models to date,” but they are not simply scaled-down versions of the flagship. They are built around different priorities: when the real bottleneck is speed and cost rather than reasoning depth, smaller models often prove more practical.

Take customer service. When a system answers the same fixed set of 200 questions every day, doctoral-level reasoning adds almost nothing. What lets the system scale is sub-second response time and a cost of only a fraction of a cent per reply.

One efficient workflow today splits the work: the flagship model (such as GPT-5.4) handles task planning and coordination, while Mini or Nano processes large volumes of repetitive sub-tasks, such as codebase searches, document reading, or form processing. After testing the models, Jerry Ma, Vice President of Technology at Perplexity, said: “Mini models have strong reasoning capabilities, and Nano models respond quickly and efficiently, suitable for real-time conversation workflows.”
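The planner/worker split described above can be sketched as a single planning call to the large model followed by many cheap calls to the small model. This is a minimal, offline sketch: the model identifiers, the prompt wording, and the assumption that the planner returns one sub-task per line are all hypothetical, and `call_model` stands in for whatever API client you actually use.

```python
from typing import Callable

# Hypothetical model identifiers for illustration; check the API docs for real names.
PLANNER_MODEL = "gpt-5.4"
WORKER_MODEL = "gpt-5.4-mini"


def run_workflow(task: str, call_model: Callable[[str, str], str]) -> list[str]:
    """Plan once with the large model, then fan sub-tasks out to the small model."""
    # One planning call; we assume (hypothetically) it returns one sub-task per line.
    plan = call_model(PLANNER_MODEL, f"Split into sub-tasks, one per line:\n{task}")
    subtasks = [line.strip() for line in plan.splitlines() if line.strip()]
    # Many cheap, repetitive calls to the small model, one per sub-task.
    return [call_model(WORKER_MODEL, subtask) for subtask in subtasks]


# Offline stand-in for a real API client, recording which model each call used.
calls: list[str] = []


def fake_call_model(model: str, prompt: str) -> str:
    calls.append(model)
    if model == PLANNER_MODEL:
        return "search repo for parse_config\nread docs/setup.md\n"
    return f"[{model}] done: {prompt}"


results = run_workflow("Fix the config loader", fake_call_model)
```

The point of the design is the call ratio: the expensive model is invoked once per task, while the per-sub-task volume lands on the cheaper, faster model.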

Benchmark Data: Mini Nears the Human Baseline on Computer Use

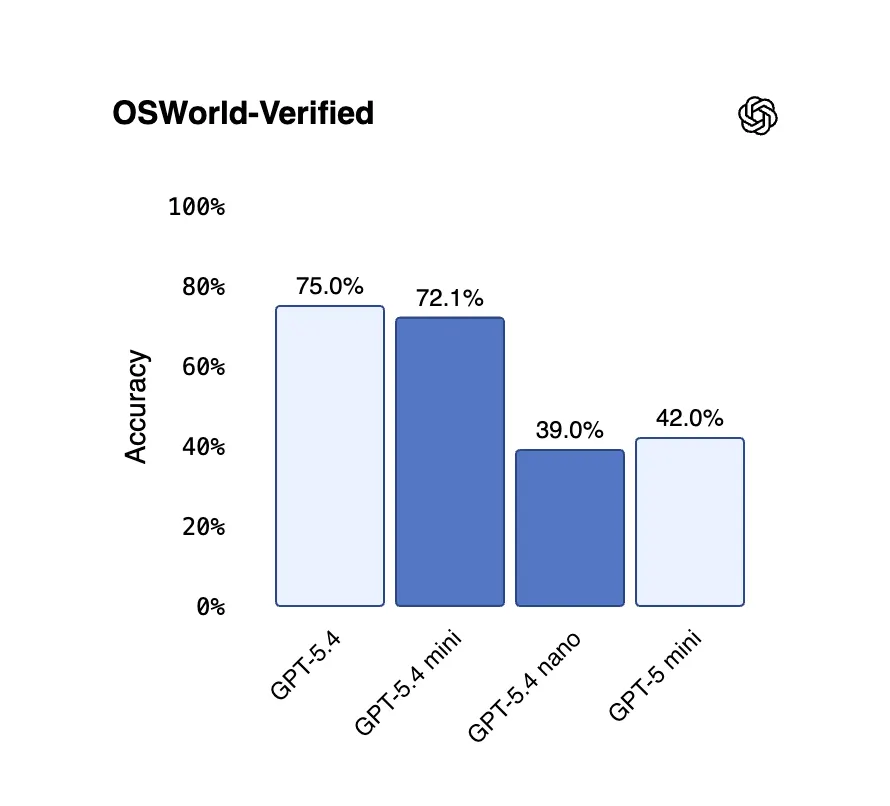

(Source: OpenAI)


Based on public benchmark data, GPT-5.4 Mini’s performance is already quite close to the flagship:

SWE-Bench Pro (measures the ability to fix real GitHub code issues): GPT-5.4 Mini scored 54.4%, the previous GPT-5 Mini scored 45.7%, and the GPT-5.4 flagship scored 57.7%.

OSWorld-Verified (evaluates real desktop operation from screenshots): Mini scored 72.1%, the GPT-5.4 flagship scored 75.0%, and the human baseline is 72.4%. Mini sits just 0.3 percentage points below human performance.

GPT-5.4 Nano: SWE-Bench Pro 52.4%, OSWorld-Verified 39.0%. That is lower than Mini, but still a significant improvement over the previous Nano models.

These numbers suggest that for desktop operation and code handling, Mini performs nearly on par with the flagship. Nano stays close to Mini on code fixes but trails far behind on desktop operation, so its appeal lies in cost for high-volume, latency-sensitive workloads.
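To make the comparisons above concrete, the reported scores can be turned into percentage-point gaps and relative ratios. This small sketch only re-uses the figures quoted in this article; the dictionary keys are informal labels, not official model identifiers.

```python
# Benchmark scores (%) as quoted in this article (source: OpenAI).
SWE_BENCH_PRO = {"gpt-5-mini": 45.7, "gpt-5.4-nano": 52.4, "gpt-5.4-mini": 54.4, "gpt-5.4": 57.7}
OSWORLD_VERIFIED = {"gpt-5.4-nano": 39.0, "gpt-5.4-mini": 72.1, "gpt-5.4": 75.0, "human": 72.4}


def gap(scores: dict[str, float], a: str, b: str) -> float:
    """Percentage-point difference a - b, rounded to one decimal place."""
    return round(scores[a] - scores[b], 1)


def share_of(scores: dict[str, float], a: str, b: str) -> float:
    """Score of a as a fraction of b's score, rounded to three decimal places."""
    return round(scores[a] / scores[b], 3)


mini_vs_human = gap(OSWORLD_VERIFIED, "gpt-5.4-mini", "human")          # below zero: not surpassed
mini_vs_old_mini = gap(SWE_BENCH_PRO, "gpt-5.4-mini", "gpt-5-mini")    # generational gain
mini_share_of_flagship = share_of(SWE_BENCH_PRO, "gpt-5.4-mini", "gpt-5.4")
nano_share_of_mini = share_of(OSWORLD_VERIFIED, "gpt-5.4-nano", "gpt-5.4-mini")
```

Worked through, Mini lands 0.3 points below the human baseline on OSWorld-Verified, gains 8.7 points over GPT-5 Mini on SWE-Bench Pro, and keeps about 94% of the flagship's SWE-Bench Pro score, while Nano reaches only about 54% of Mini's OSWorld-Verified score.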

Pricing Structure and Availability: Developers vs. General Users

API Pricing: GPT-5.4 Mini costs $0.75 per million input tokens and $4.50 per million output tokens; GPT-5.4 Nano costs $0.20 per million input tokens and $1.25 per million output tokens. Nano's input price is roughly a quarter of Mini's, and its output price is a little over a quarter.
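The per-million-token prices above translate into per-reply costs with simple arithmetic. The sketch below assumes a hypothetical customer-service reply of 500 input tokens and 200 output tokens, and reuses the 200-questions-per-day volume from the earlier example; real token counts depend entirely on your prompts.

```python
# Per-million-token API prices (USD) as listed in this article.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request, given token counts and per-million prices."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical reply size: 500 input tokens, 200 output tokens.
mini_per_reply = cost_usd("gpt-5.4-mini", 500, 200)
nano_per_reply = cost_usd("gpt-5.4-nano", 500, 200)

# Hypothetical daily volume: 200 questions per day.
mini_per_day = 200 * mini_per_reply
nano_per_day = 200 * nano_per_reply
```

Under these assumptions a Mini reply costs about $0.0013 (roughly an eighth of a cent) and a Nano reply about $0.00035, so 200 replies a day come to roughly $0.26 on Mini and $0.07 on Nano.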

User Accessibility: GPT-5.4 Mini is available to ChatGPT Free and ChatGPT Plus users through the “Thinking” option in the “+” menu. When paid users reach their GPT-5.4 usage limit, the system automatically switches them to Mini. GPT-5.4 Nano is currently API-only: it targets developers and is not offered directly to consumers.
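Developers who want similar behavior in their own apps can route requests to the larger model until a quota runs out, then fall back to the smaller one. This is a hypothetical sketch of that pattern, not how ChatGPT implements it; the `UsageTracker` class, the quota value, and the model identifiers are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class UsageTracker:
    limit: int      # hypothetical per-window request cap for the primary model
    used: int = 0   # requests already sent to the primary model in this window


def pick_model(tracker: UsageTracker,
               primary: str = "gpt-5.4",          # hypothetical identifier
               fallback: str = "gpt-5.4-mini") -> str:
    """Return the primary model while quota remains, otherwise the fallback."""
    if tracker.used < tracker.limit:
        tracker.used += 1
        return primary
    return fallback


tracker = UsageTracker(limit=2)
routed = [pick_model(tracker) for _ in range(4)]
```

With a cap of two, the first two requests go to the primary model and every later request falls back to the smaller one until the tracker is reset.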

Frequently Asked Questions

What are the main differences between GPT-5.4 Mini and GPT-5.4 flagship?

GPT-5.4 Mini is over twice as fast as the previous GPT-5 Mini and scores 72.1% on OSWorld-Verified, just 0.3 points below the human benchmark of 72.4% and slightly below the flagship's 75.0%. The flagship's advantage lies in reasoning depth and complex tasks, while Mini's speed and cost make it more practical for large-scale repetitive work.

What are the best use cases for GPT-5.4 Nano?

GPT-5.4 Nano is positioned as a developer tool, suited to lightweight real-time conversation workflows such as instant customer service or high-volume daily automated queries. At only $0.20 per million input tokens, it makes large-scale deployment economically feasible for startups.

How to use GPT-5.4 Mini in ChatGPT?

GPT-5.4 Mini is currently available to ChatGPT Free and Plus users through the “Thinking” option in the “+” menu. Paid users are automatically switched to Mini when they reach their GPT-5.4 usage limit.